Which campaign actually performs better?

The analysis is designed to go beyond clicks and evaluate whether the test variant improves downstream purchase behavior enough to justify the extra media investment.

60 daily rows across control and test

The dataset covers August 1 to August 30, 2019, with spend, impressions, reach, clicks, searches, content views, add to cart, and purchases for both variants.

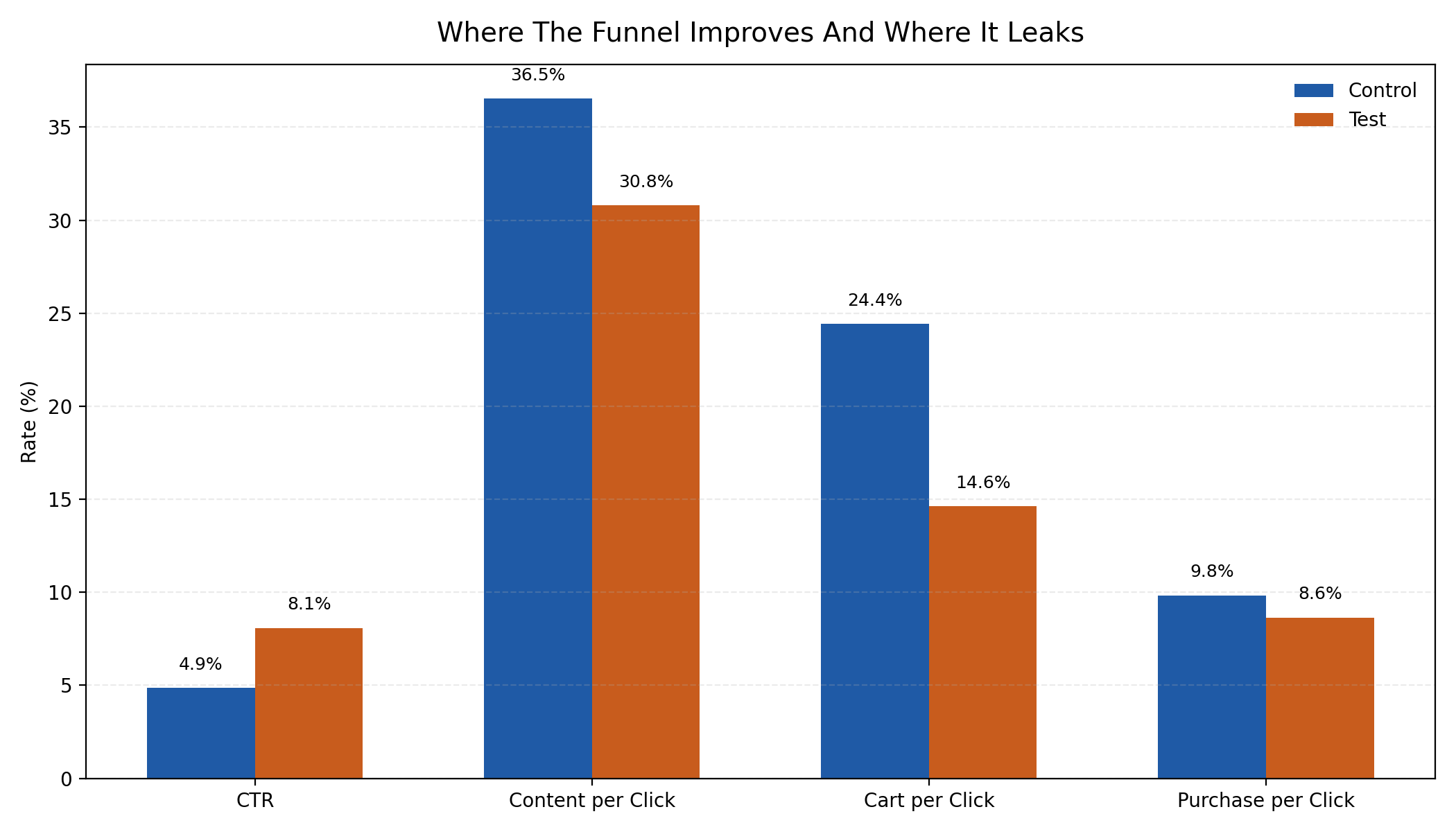

The variants win different parts of the funnel

The test campaign drives much stronger traffic efficiency, while the control campaign retains meaningfully stronger commercial intent deeper in the journey.

Why a single headline metric would be incomplete here

A campaign variant can look successful because it increases clicks, impressions, or other top-of-funnel metrics while simultaneously weakening downstream purchase behavior. In this dataset, that trade-off is exactly what needs to be tested.

This case study treats the campaign as a multi-stage funnel experiment. Rather than comparing only average levels, the analysis examines how each variant changes the path from reach to clicks, from clicks to carting, and from traffic to purchases. That makes the recommendation more decision-ready than a one-metric summary.

| Project component | What was done |
|---|---|
| Data preparation | Column standardization, date parsing, derived rate metrics, cost metrics, and missing-value handling |
| Classical inference | Welch t-tests, Mann-Whitney tests, paired day-level tests, sign tests, and FDR correction |
| Uncertainty estimation | Bootstrap confidence intervals and permutation tests |
| Probability view | Bayesian posterior probability of one variant beating the other on key rates |
| Diagnostics | Outlier detection, weekday effects, cumulative curves, and correlation-shift analysis |
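The derived rate and cost metrics from the data-preparation step can be sketched roughly as follows. This is a minimal illustration, not the project's actual code: the column names (`spend`, `impressions`, `clicks`, `add_to_cart`, `purchases`, `variant`, `date`) and the helper `add_funnel_metrics` are assumptions, and the two sample rows are made up rather than taken from the dataset.

```python
import pandas as pd


def add_funnel_metrics(df: pd.DataFrame) -> pd.DataFrame:
    """Return a copy of df with derived funnel-rate and cost columns.

    Column names are assumed for illustration; adapt them to the real schema.
    """
    out = df.copy()
    out["ctr"] = out["clicks"] / out["impressions"]
    out["cart_per_click"] = out["add_to_cart"] / out["clicks"]
    out["purchase_per_click"] = out["purchases"] / out["clicks"]
    out["purchase_per_impression"] = out["purchases"] / out["impressions"]
    # CPM is the cost of one thousand impressions
    out["cpm"] = out["spend"] / out["impressions"] * 1000
    out["cost_per_purchase"] = out["spend"] / out["purchases"]
    return out


# Illustrative rows only, not values from the case-study data
sample = pd.DataFrame({
    "variant": ["control", "test"],
    "date": pd.to_datetime(["2019-08-01", "2019-08-01"]),
    "spend": [2000.0, 3000.0],
    "impressions": [100_000, 50_000],
    "clicks": [5_000, 5_000],
    "add_to_cart": [1_500, 800],
    "purchases": [500, 450],
})
metrics = add_funnel_metrics(sample)
print(metrics[["variant", "ctr", "cart_per_click", "cpm"]])
```

Rows with zero clicks or impressions would produce infinite or missing rates here, so a real pipeline would need to handle them explicitly before any testing.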

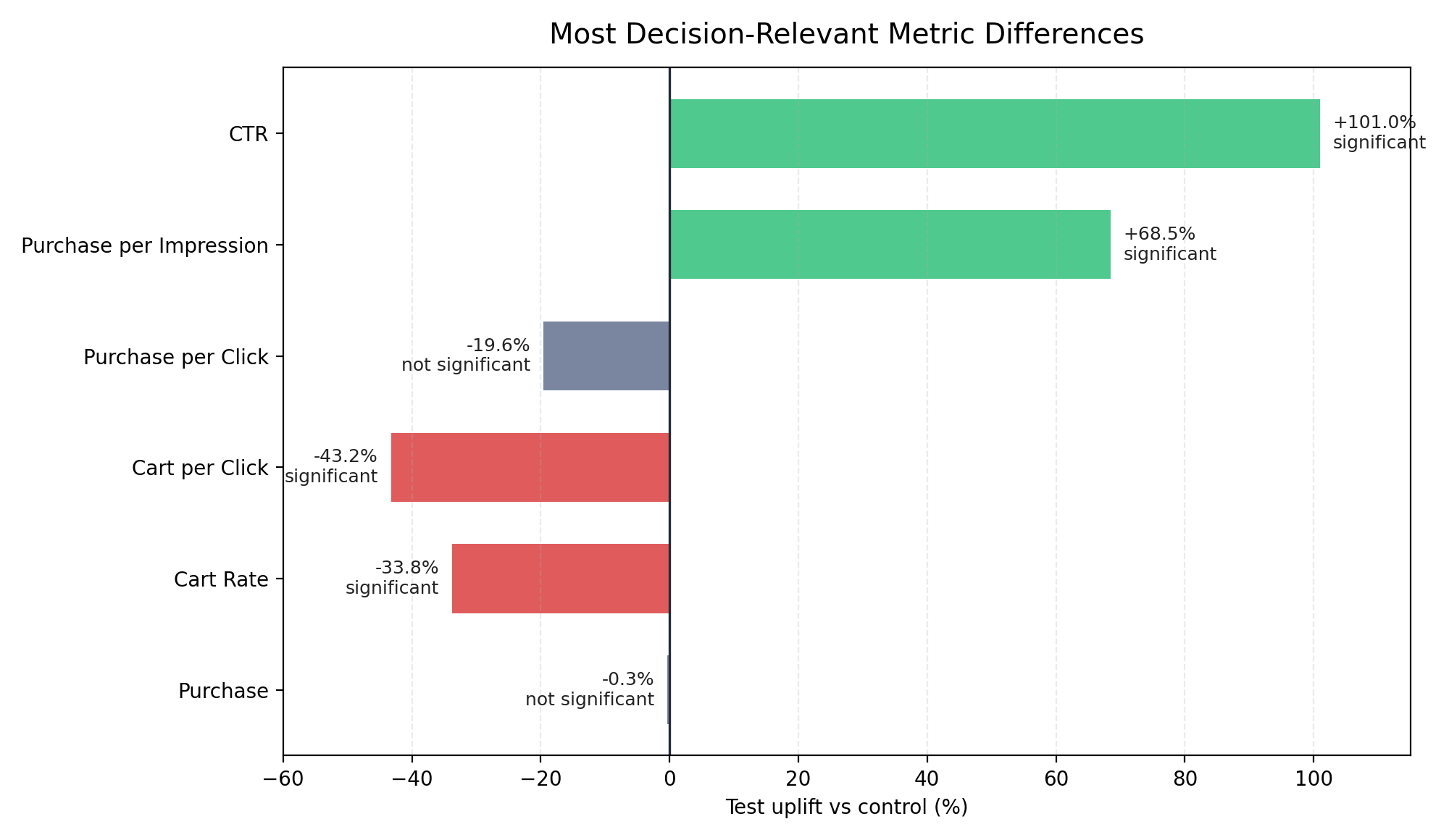

The test variant wins attention, but the control variant wins shopping intent

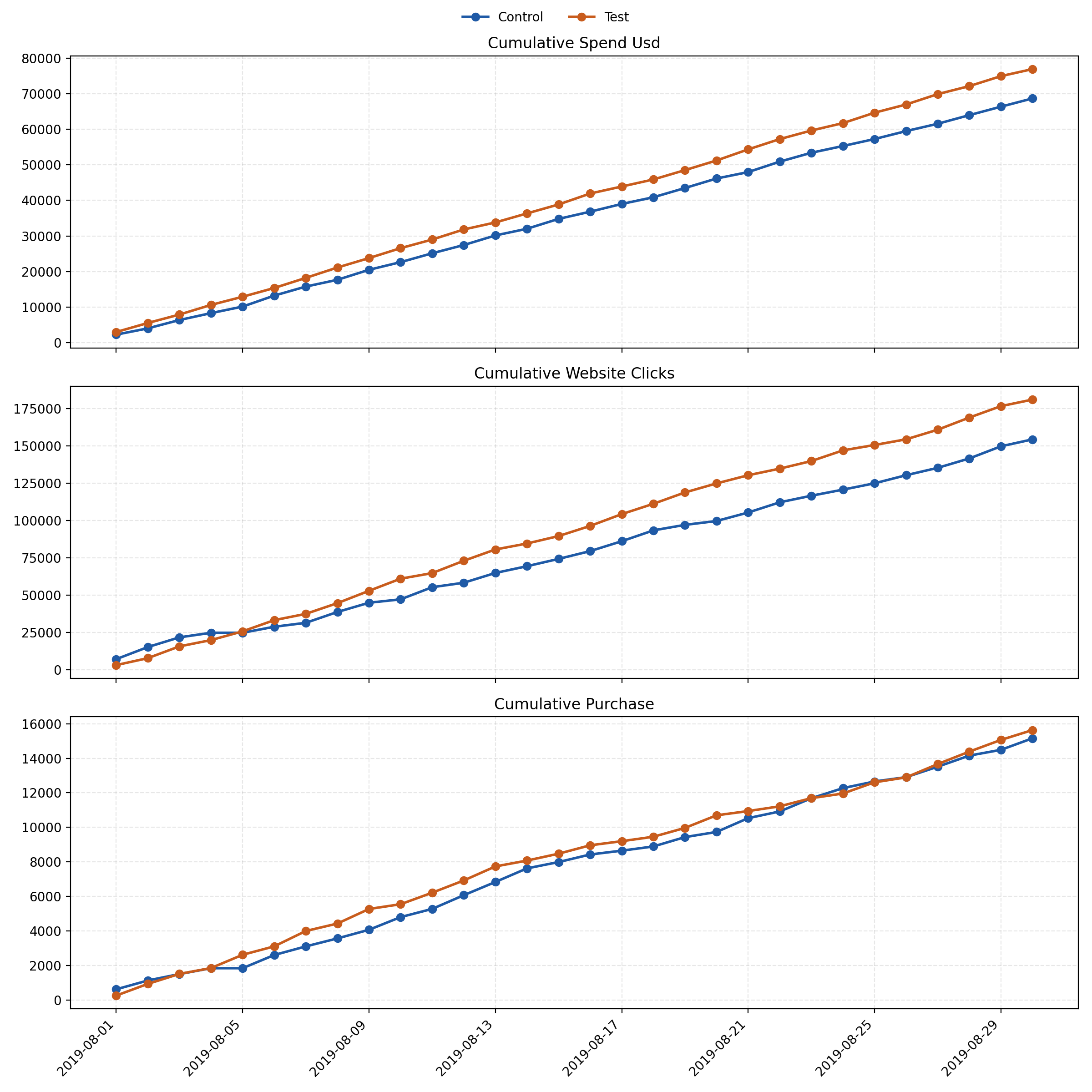

The strongest result is not a final purchase lift. The more important story is structural: the test variant is much better at generating traffic from impressions, while the control variant is materially stronger at turning that traffic into carts. Final purchases remain effectively unchanged because upper-funnel gains and mid-funnel losses offset one another.

Formal significance testing was performed for the main comparison set using Welch t-tests, Mann-Whitney tests, permutation tests, bootstrap intervals, paired calendar-day tests, and false-discovery-rate correction. In that framework, the purchase difference itself is not statistically significant, while CTR, purchase per impression, cart per click, and cart rate show much clearer separation.
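As a rough sketch of this testing framework, the snippet below runs a Welch t-test, a Mann-Whitney test, and a paired day-level t-test on one metric with SciPy, then adjusts a set of p-values. The source only says "false-discovery-rate correction"; the Benjamini-Hochberg procedure used here is an assumption, as are the helper names and the synthetic daily rates.

```python
import numpy as np
from scipy import stats


def benjamini_hochberg(p_values):
    """Return Benjamini-Hochberg adjusted p-values in the original order."""
    p = np.asarray(p_values, dtype=float)
    n = p.size
    order = np.argsort(p)
    scaled = p[order] * n / np.arange(1, n + 1)
    # Enforce monotonicity, working down from the largest p-value
    adjusted_sorted = np.minimum.accumulate(scaled[::-1])[::-1]
    adjusted = np.empty(n)
    adjusted[order] = np.clip(adjusted_sorted, 0.0, 1.0)
    return adjusted


def compare_metric(test_values, control_values):
    """Welch, Mann-Whitney, and paired day-level p-values for one metric."""
    t = np.asarray(test_values, dtype=float)
    c = np.asarray(control_values, dtype=float)
    welch = stats.ttest_ind(t, c, equal_var=False)
    mwu = stats.mannwhitneyu(t, c, alternative="two-sided")
    # The paired test assumes both arrays are aligned by calendar day
    paired = stats.ttest_rel(t, c)
    return {
        "welch_p": float(welch.pvalue),
        "mannwhitney_p": float(mwu.pvalue),
        "paired_p": float(paired.pvalue),
    }


# Synthetic daily rates for illustration only (not the case-study data)
rng = np.random.default_rng(0)
test_ctr = rng.normal(0.10, 0.01, size=30)
control_ctr = rng.normal(0.05, 0.01, size=30)
result = compare_metric(test_ctr, control_ctr)

# Adjust a hypothetical family of raw p-values, one per metric
adjusted = benjamini_hochberg([0.01, 0.04, 0.03, 0.20])
print(result, adjusted)
```

Applying the correction across the whole family of compared metrics, rather than per test, is what keeps the share of false discoveries under control when many funnel rates are examined at once.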

The test roughly doubles CTR (10.24% vs. 5.10%) and significantly improves purchases per impression (0.84% vs. 0.50%). It is clearly more effective at turning paid visibility into visits.

The control variant is far better at converting visits into carts (27.82% cart per click vs. 15.79% for the test). That points to stronger purchase intent, stronger message match, or a more qualified audience.

The test spends more and pays a much higher CPM. Higher traffic volume does not come for free, so incremental engagement needs to be judged against downstream efficiency.

This is not a clean end-to-end win for the test. A click-only read would miss the fact that the final purchase result is statistically flat.

Upper-funnel gains do not translate into a clear purchase lead

The first chart below focuses on funnel rates rather than raw volumes, making the trade-off easier to read. The test variant clearly improves CTR, but the control retains stronger cart formation and slightly stronger purchase efficiency per click, although that last gap is not statistically significant.

The cumulative chart adds the time dimension. It shows that the test campaign keeps building a click advantage across the month, but the cumulative purchase lines stay close together. That visual pattern aligns with the formal tests: a clear traffic effect, but no reliable end-of-funnel purchase win.
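The cumulative view can be approximated with a grouped running sum. This is a sketch under assumed column names (`variant`, `date`, `clicks`, `purchases`); the helper `cumulative_by_variant` and the toy rows are hypothetical and not part of the original project.

```python
import pandas as pd


def cumulative_by_variant(df, columns=("clicks", "purchases")):
    """Running totals per variant, ordered by date."""
    ordered = df.sort_values(["variant", "date"])
    cum = ordered.groupby("variant")[list(columns)].cumsum()
    cum.columns = [f"cum_{c}" for c in columns]
    # Both frames share the same index, so concat aligns row by row
    return pd.concat([ordered[["variant", "date"]], cum], axis=1)


# Toy rows for illustration only, deliberately out of date order
toy = pd.DataFrame({
    "variant": ["test", "control", "test", "control", "test", "control"],
    "date": pd.to_datetime([
        "2019-08-02", "2019-08-01", "2019-08-01",
        "2019-08-03", "2019-08-03", "2019-08-02",
    ]),
    "clicks": [50, 10, 40, 30, 60, 20],
    "purchases": [2, 1, 2, 3, 2, 2],
})
curves = cumulative_by_variant(toy)
print(curves)
```

Plotting `cum_clicks` and `cum_purchases` against `date` for each variant would give the kind of diverging-clicks, overlapping-purchases picture described above.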

Combining frequentist and Bayesian evidence

Multiple testing lenses are useful here because the dataset is small enough that any one method could be misleading on its own. Independent-sample comparisons, paired day-level tests, bootstrap intervals, permutation tests, and posterior probabilities all point in the same broad direction: the test improves attention efficiency, but not purchase quality per visit.
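The resampling side of this evidence can be sketched with NumPy alone. The snippet below shows a percentile bootstrap interval for a difference in means and a two-sided permutation test; the function names, resample counts, seeds, and synthetic data are all assumptions chosen for illustration, and the original analysis may have used different settings.

```python
import numpy as np


def bootstrap_mean_diff_ci(a, b, n_boot=5000, alpha=0.05, seed=0):
    """Percentile bootstrap CI for mean(a) - mean(b), resampling each group."""
    rng = np.random.default_rng(seed)
    a = np.asarray(a, dtype=float)
    b = np.asarray(b, dtype=float)
    idx_a = rng.integers(0, a.size, size=(n_boot, a.size))
    idx_b = rng.integers(0, b.size, size=(n_boot, b.size))
    diffs = a[idx_a].mean(axis=1) - b[idx_b].mean(axis=1)
    lo, hi = np.quantile(diffs, [alpha / 2, 1 - alpha / 2])
    return float(lo), float(hi)


def permutation_p_value(a, b, n_perm=5000, seed=0):
    """Two-sided permutation p-value for a difference in means."""
    rng = np.random.default_rng(seed)
    a = np.asarray(a, dtype=float)
    b = np.asarray(b, dtype=float)
    observed = abs(a.mean() - b.mean())
    pooled = np.concatenate([a, b])
    count = 0
    for _ in range(n_perm):
        shuffled = rng.permutation(pooled)
        diff = abs(shuffled[:a.size].mean() - shuffled[a.size:].mean())
        count += int(diff >= observed)
    # Add-one smoothing keeps the estimate strictly above zero
    return (count + 1) / (n_perm + 1)


# Synthetic daily rates for illustration only (not the case-study data)
rng = np.random.default_rng(1)
test_rate = rng.normal(0.10, 0.01, size=30)
control_rate = rng.normal(0.05, 0.01, size=30)
ci_low, ci_high = bootstrap_mean_diff_ci(test_rate, control_rate)
p_perm = permutation_p_value(test_rate, control_rate)
print(ci_low, ci_high, p_perm)
```

Neither method relies on a normality assumption, which is useful with only 30 daily observations per variant.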

| Metric | Test mean | Control mean | Adjusted p-value | Interpretation |
|---|---|---|---|---|
| CTR | 10.24% | 5.10% | 0.0012 | Statistically supported lift in top-of-funnel engagement |
| Cart per click | 15.79% | 27.82% | 0.0013 | Control converts visits into shopping intent much better |
| Purchase per impression | 0.84% | 0.50% | 0.0052 | Test extracts more purchases from each impression |
| Purchase per click | 8.64% | 9.83% | 0.1977 | Directional control advantage, but not significant after correction |
| Purchase | 521.23 | 522.79 | 0.9760 | No evidence of a meaningful final-purchase difference |

Best concise conclusion: the test variant turns impressions into traffic far more efficiently, but the control variant attracts users with stronger buying intent. If the objective is traffic, the test is attractive. If the objective is downstream conversion quality, the control remains safer.
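The Bayesian probability view used alongside these p-values can be illustrated with a simple Beta-Binomial model. This is a sketch under stated assumptions: pooled counts per variant, a uniform Beta(1, 1) prior, and Monte Carlo draws. The source does not specify the model or prior actually used, and the function name and counts below are hypothetical.

```python
import numpy as np


def prob_test_beats_control(succ_test, trials_test, succ_control, trials_control,
                            n_draws=100_000, seed=0):
    """Posterior probability that the test rate exceeds the control rate.

    Assumes independent Beta(1, 1) priors and binomial outcomes.
    """
    rng = np.random.default_rng(seed)
    post_test = rng.beta(1 + succ_test, 1 + trials_test - succ_test, n_draws)
    post_control = rng.beta(1 + succ_control, 1 + trials_control - succ_control, n_draws)
    return float((post_test > post_control).mean())


# Hypothetical counts for illustration only (not the case-study data)
p_ctr = prob_test_beats_control(100, 1000, 50, 1000)
p_tie = prob_test_beats_control(50, 1000, 50, 1000)
print(p_ctr, p_tie)
```

A probability close to 1 or 0 indicates a clear direction, while a value near 0.5 matches the "no evidence of a difference" reading of a flat metric.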

Going beyond averages to understand why the result happens

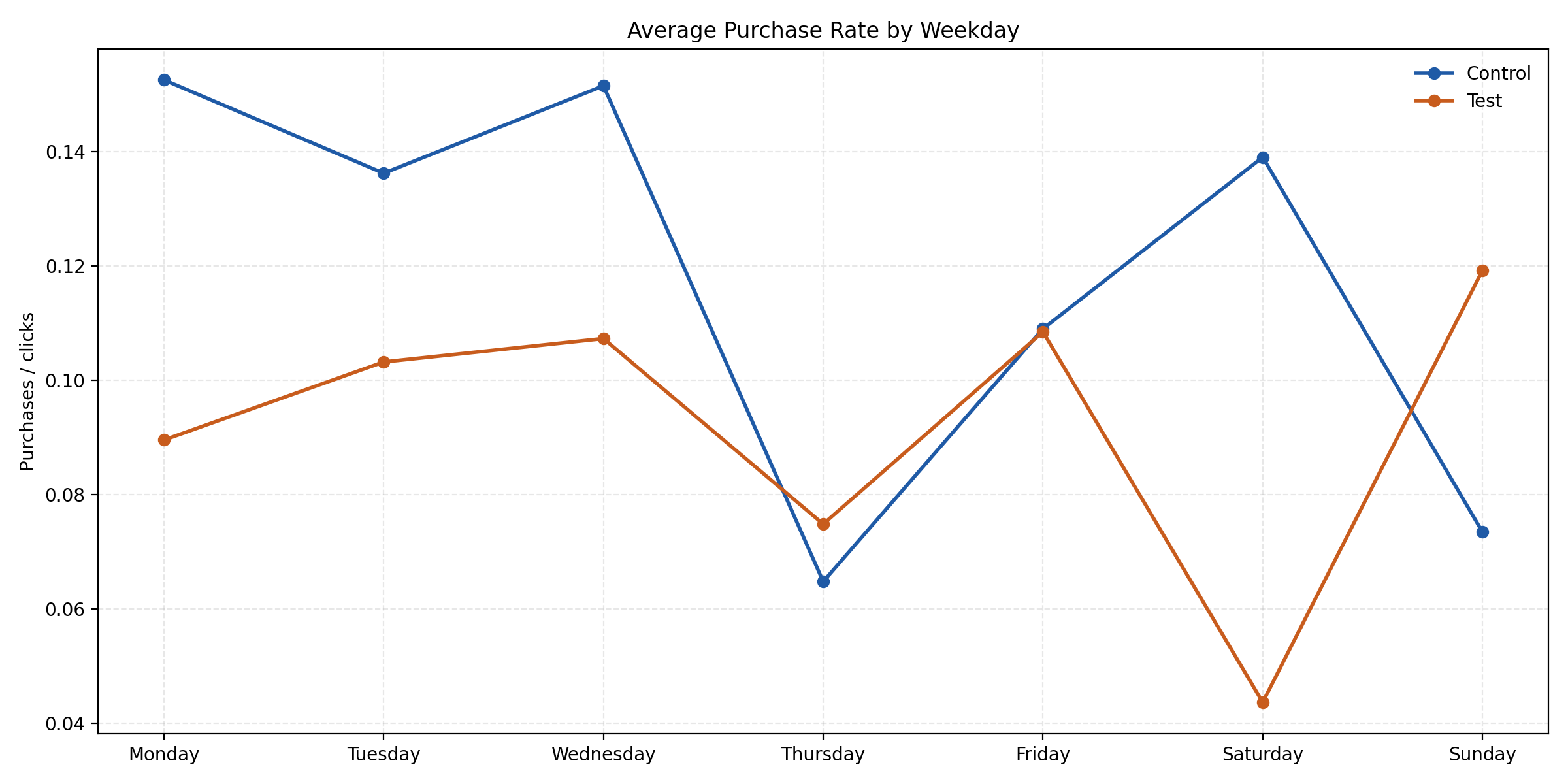

Additional diagnostic views help separate stable evidence from patterns that are useful but more exploratory. The weekday chart below is a good example: there are visible differences, but none of the weekday-specific purchase-rate gaps remain statistically significant after multiple-testing correction. That means the shape is informative, but it should not be overclaimed.
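A weekday breakdown of this kind can be sketched with a pivot table of mean daily rates. The column names, the helper `weekday_rate_table`, and the toy rows are assumptions for illustration; any per-weekday significance tests would still need the multiple-testing correction described above.

```python
import pandas as pd


def weekday_rate_table(df, numerator="purchases", denominator="clicks"):
    """Mean daily rate per weekday (rows) and variant (columns)."""
    tmp = df.assign(
        weekday=df["date"].dt.day_name(),
        rate=df[numerator] / df[denominator],
    )
    return tmp.pivot_table(
        index="weekday", columns="variant", values="rate", aggfunc="mean"
    )


# Toy rows for illustration only: two Thursdays and one Friday per variant
toy = pd.DataFrame({
    "variant": ["control", "control", "control", "test", "test", "test"],
    "date": pd.to_datetime([
        "2019-08-01", "2019-08-08", "2019-08-02",
        "2019-08-01", "2019-08-08", "2019-08-02",
    ]),
    "clicks": [100, 100, 200, 100, 100, 200],
    "purchases": [10, 30, 20, 20, 20, 10],
})
weekday_table = weekday_rate_table(toy)
print(weekday_table)
```

With only four or five observations per weekday and variant in a 30-day window, these cells are noisy, which is consistent with treating the weekday pattern as exploratory.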

Recommended interpretation for the business

The test should not be declared a universal winner

The extra traffic is real, but the test should not replace the control outright if the business optimizes for efficient purchase behavior rather than raw engagement.

The top-of-funnel strength is worth preserving

The click-generation advantage is meaningful. The next iteration should retain that strength while improving what happens after the click.

A follow-up test should target the landing-page and cart step

The evidence points to a handoff problem between ad engagement and commercial intent. That is the most promising place for the next experiment.

Future experiment reviews should stay multi-metric

This project demonstrates why decision-making should combine funnel metrics, cost metrics, and uncertainty measures instead of treating one KPI as the whole story.

Tools Used

The project was built in Python using Pandas and NumPy for data preparation and metric engineering, SciPy for inferential testing, and Matplotlib for the visual layer. The final analysis combines classical significance testing with resampling methods and Bayesian probability estimates to produce a decision-ready A/B testing workflow.